MATT and its software suite were designed to reproduce human interaction with a device as accurately as possible. This makes MATT effective at recognizing any on-screen element, pressing buttons regardless of their position on the device, and executing more complex gestures such as pinch, swipe, and rotate. As a result, test cases can be written using only visual elements, which makes the test flow independent of the hardware or software. MATT is simpler still to use because a test written for one device (e.g. an Android/iOS phone or tablet) can be reused on other devices under test (DUTs) without updating the test case or the icons.

MATT’s computer vision API is resilient to noise caused by environmental factors (e.g. variations in artificial light or sunlight) and to small changes in an icon’s size, rotation, position, or shape, such as those that may occur from one OS to another. If a test case requires it, custom AI elements can be added. An example of such a situation is detailed below:

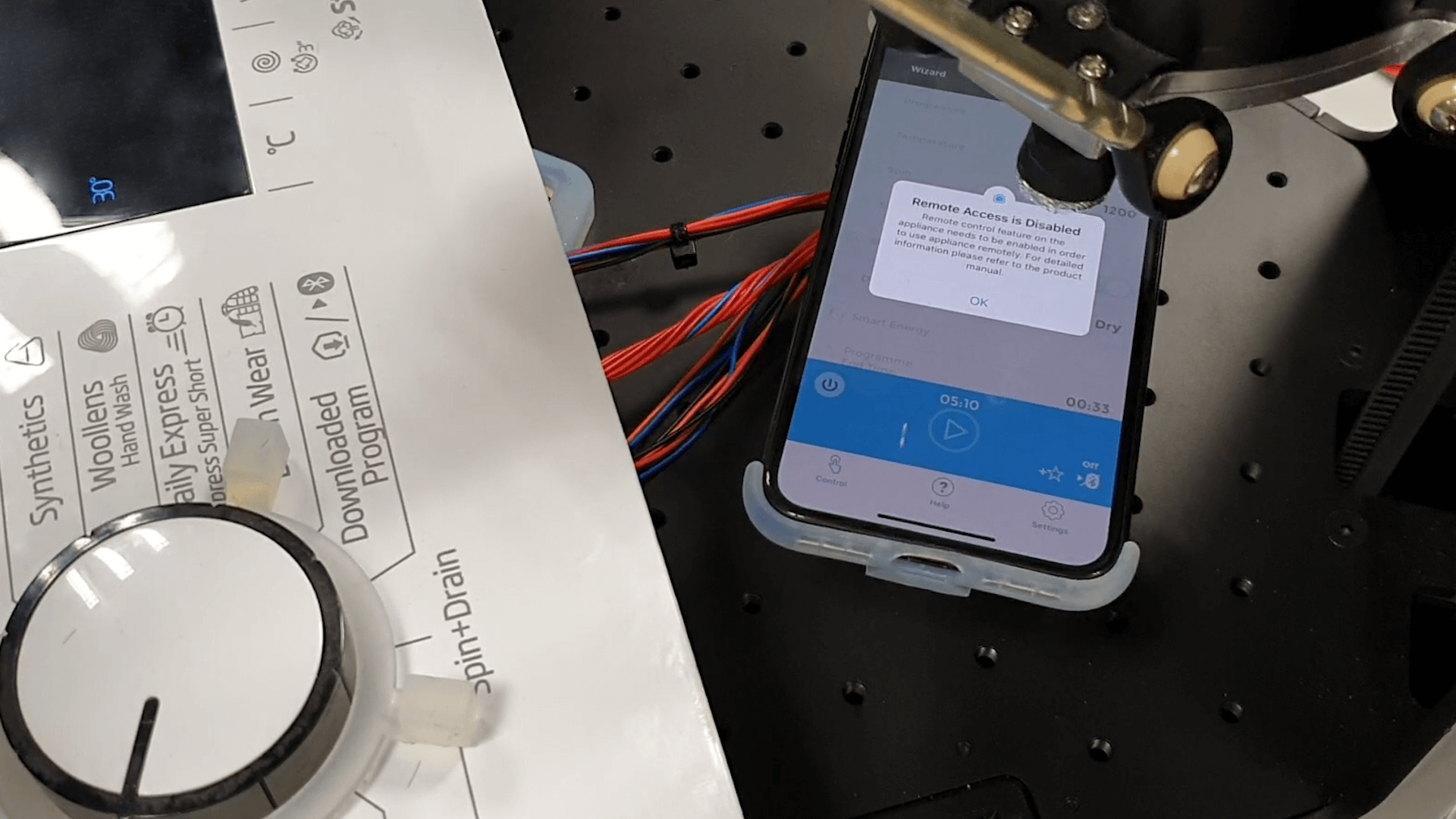

During a 96-hour testing cycle run by MATT, various warnings appeared on the screen, either as OS notifications (e.g. low battery) or as pop-ups generated by apps, and interfered with the test flow. To continue the testing cycle, MATT first needed to dismiss these pop-ups. This step was implemented with an object detection neural network trained on more than 20,000 images of pop-ups generated by different applications. By applying OCR to the detected pop-up window, key dismiss buttons (“Ok”, “Agree”, “Dismiss”, “Not now”, “Later”, “Cancel”, “Clear”, etc.) were identified and tapped, and the test cycle proceeded.
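The last step of the pop-up handling described above (matching OCR text inside a detected pop-up against a list of dismiss labels and choosing a tap point) can be sketched as follows. This is a minimal, hypothetical illustration, not MATT’s actual API: the names `Box`, `OcrWord`, `DISMISS_KEYWORDS`, and `find_dismiss_tap` are assumptions. The sketch also assumes that the object detector has already returned the pop-up’s bounding box, that the OCR engine returns line-level text with bounding boxes (so “Not now” arrives as one string), and that the keyword order is a priority order.

```python
import string
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple

# Hypothetical dismiss labels, taken from the examples in the text.
# Assumption: earlier entries are preferred when several labels are visible.
DISMISS_KEYWORDS = ("ok", "agree", "dismiss", "not now", "later", "cancel", "clear")


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in screen pixels (hypothetical type)."""
    x: int
    y: int
    w: int
    h: int

    def contains(self, other: "Box") -> bool:
        # True if `other` lies entirely inside this box.
        return (self.x <= other.x and self.y <= other.y
                and other.x + other.w <= self.x + self.w
                and other.y + other.h <= self.y + self.h)

    def center(self) -> Tuple[int, int]:
        return (self.x + self.w // 2, self.y + self.h // 2)


@dataclass(frozen=True)
class OcrWord:
    """One OCR result: recognized text plus its bounding box (hypothetical type)."""
    text: str
    box: Box


def normalize(text: str) -> str:
    # Lowercase, trim surrounding punctuation/whitespace, collapse inner spaces,
    # so "Not  now." and "NOT NOW" both become "not now".
    stripped = text.lower().strip(string.punctuation + string.whitespace)
    return " ".join(stripped.split())


def find_dismiss_tap(popup: Box, words: Iterable[OcrWord]) -> Optional[Tuple[int, int]]:
    """Return the (x, y) point to tap, or None if no dismiss label is inside the pop-up."""
    candidates = [w for w in words
                  if popup.contains(w.box) and normalize(w.text) in DISMISS_KEYWORDS]
    if not candidates:
        return None
    # Pick the candidate whose label has the highest priority (lowest index).
    best = min(candidates, key=lambda w: DISMISS_KEYWORDS.index(normalize(w.text)))
    return best.box.center()


if __name__ == "__main__":
    popup = Box(100, 200, 400, 300)
    words = [
        OcrWord("Update available", Box(120, 220, 200, 30)),
        OcrWord("Later", Box(150, 440, 80, 40)),
        OcrWord("OK", Box(350, 440, 80, 40)),
        OcrWord("Cancel", Box(10, 10, 50, 20)),  # outside the pop-up, ignored
    ]
    print(find_dismiss_tap(popup, words))  # (390, 460): the centre of "OK"
```

Restricting the search to text inside the detected pop-up keeps the matcher from tapping an unrelated “Cancel” or “OK” elsewhere on the screen. This is one plausible reason to combine object detection with OCR rather than running OCR on the whole frame.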